Machine Learning with Python - Resources.Machine Learning With Python - Quick Guide.Improving Performance of ML Model (Contd…).It also demonstrates a trade-off between sensitivity (recall and specificity or the true negative rate). ROC curve shows the true positive rates against the false positive rate at various cut points. F1 score is a weighted average score of the true positive (recall) and precision. F-score helps to measure Recall and Precision at the same time. So to make them comparable, we use F-Score. It is difficult to compare two models with low precision and high recall or vice versa. Precision = TP/Predicted: YES Prevalence: How often does the yes condition actually occur in our sample? TNR = TN/actual no Precision: When it predicts yes, how often is it correct?

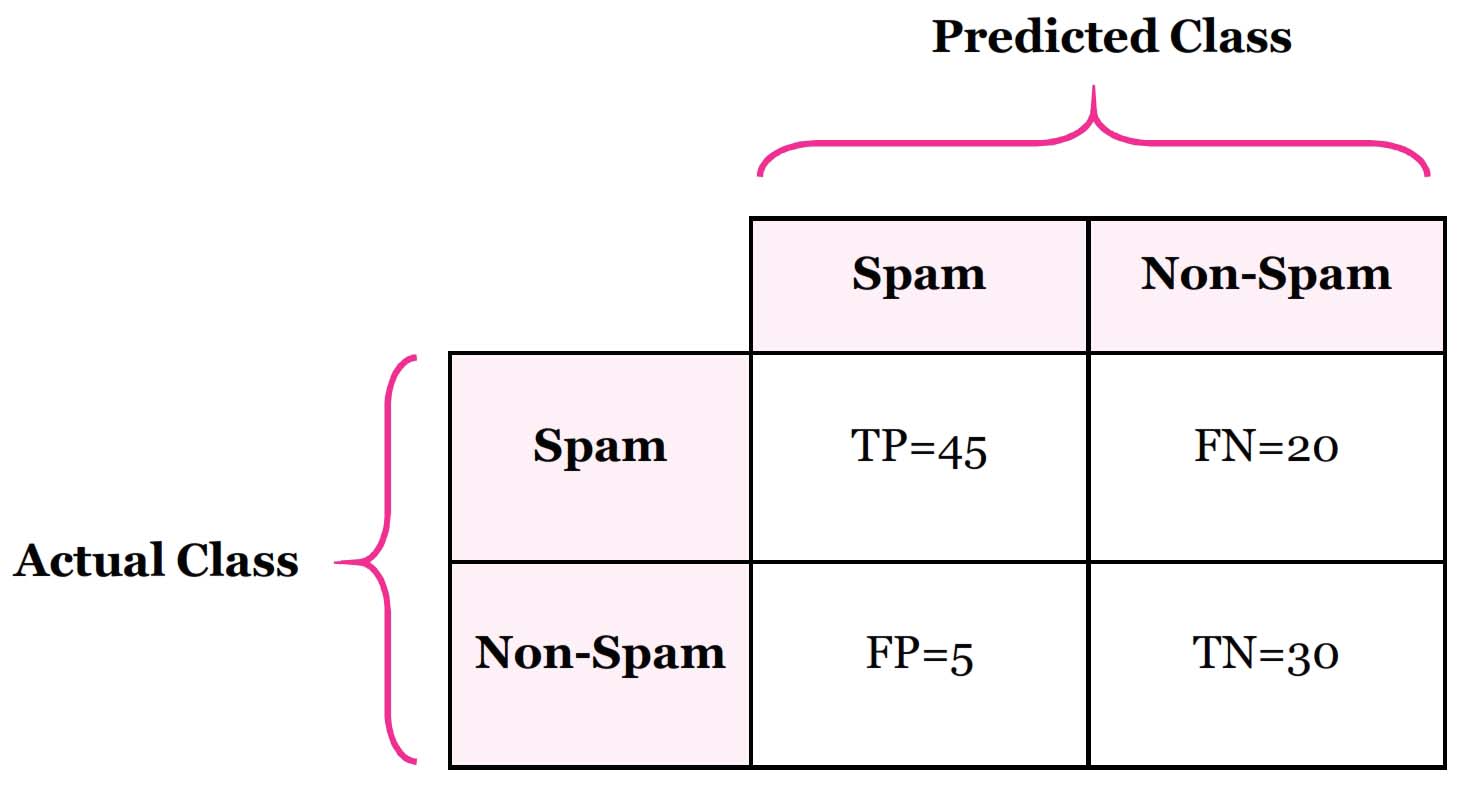

TPR or Recall = TP/actual yes False Positive Rate (FPR): When it’s actually no, how often does it predict yes?įPR = FP/actual no True Negative Rate (TNR): When it’s actually no, how often does it predict no?. It is also known as “Sensitivity” or “Recall” Misclassification rate = (FP+FN)/total True Positive Rate (TPR): When it’s actually yes, how often does it predict yes?. Accuracy: How often is the classifier correct?Īccuracy = (TP +TN)/total Misclassification Rate: Overall, how often is it wrong? It is also called “Error rate” Just incorporated into our confusion table and added both row and columnsīelow terms are computed from the confusion matrix for a binary problem. It is also known as a “Type 1 error”įalse Negatives (FN): We predicted no, but they are actually leaving the network (churn). True Negatives (TN): We predicted no, and they are not leaving the network.įalse Positives (FP): We predicted yes, but they are not leaving the network (not churn). True Positives (TP): These are the people in which we predicted yes (churn), and they are not leaving the network (not churn) Let’s see the important terms associated with this confusion matrix with the above example In reality, 155 customers are churn, and 45 customers are not churn.Out of 200 customers, the classifier predicted ‘yes’ 160 times, and ‘no’ 40 times.The classifier made a total of 200 predictions (200 customers' records were analyzed ).'Yes' means churn (leaving the network) and 'No' means not churn (not leaving the network). There are two possible predicted classes: ‘yes’ and ‘no’. Our target variable is churn (binary classifier). It’s a simple table which helps us to know the performance of the classification model on test data for the true values are known.Ĭonsider we are doing telecom churn modelling. This is where the term Confusion matrix comes into the picture.Ī confusion matrix is a performance measurement technique for Machine learning classification problems. 
Better the effectiveness, better the performance of the model. Wait Wait Wait! How can we say it’s an outstanding model? One way we can say this is by measuring the effectiveness of the model. What’s happening in our day to day modelling?ġ) We are getting a business problem 2) Gathering data 3) Cleaning the data 4) Building all kinds of outstanding models, right? Then, we are getting output in probabilities. In this article, I will try to explain the confusion matrix in simpler terms. The concept behind the confusion matrix is very simple, but its related terminology can be a little confusing. Mathematical contributions to the theory of evolution (PDF). The confusion matrix was invented in 1904 by Karl Pearson. Also, I tried to find the origin of the term ‘confusion’ and found the following from I was confused when I first tried to learn this concept. Confusion matrix is a famous question in many data science interviews.
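To make the formulas concrete, here is a minimal Python sketch that computes each rate for the churn example. The article only gives the totals (160 predicted yes, 40 predicted no, 155 actual yes, 45 actual no), so the four cell counts below are an assumption: they are one set of values consistent with those totals, not figures from the original table. The `ConfusionMatrix` class and its field names are hypothetical, introduced just for this illustration.

```python
from dataclasses import dataclass


@dataclass
class ConfusionMatrix:
    tp: int  # predicted yes, actually yes
    fp: int  # predicted yes, actually no (Type 1 error)
    fn: int  # predicted no, actually yes (Type 2 error)
    tn: int  # predicted no, actually no

    def metrics(self) -> dict:
        total = self.tp + self.fp + self.fn + self.tn
        actual_yes = self.tp + self.fn
        actual_no = self.fp + self.tn
        predicted_yes = self.tp + self.fp
        precision = self.tp / predicted_yes
        recall = self.tp / actual_yes
        return {
            "accuracy": (self.tp + self.tn) / total,
            "misclassification_rate": (self.fp + self.fn) / total,
            "tpr_recall": recall,
            "fpr": self.fp / actual_no,
            "tnr": self.tn / actual_no,
            "precision": precision,
            "prevalence": actual_yes / total,
            # F1 is the harmonic mean of precision and recall
            "f1": 2 * precision * recall / (precision + recall),
        }


# ASSUMED cell counts, chosen only so that the row and column totals match
# the article: 160 predicted yes, 40 predicted no, 155 actual yes, 45 actual no.
churn = ConfusionMatrix(tp=150, fp=10, fn=5, tn=35)
churn_metrics = churn.metrics()

for name, value in churn_metrics.items():
    print(f"{name}: {value:.3f}")
```

With different cell counts that still match the totals, the individual rates would change, which is why the totals alone are not enough to judge a classifier.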
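The idea of an ROC curve, true positive rate against false positive rate at various cut points, can also be sketched in a few lines. This is only an illustration: the labels and predicted probabilities below are made-up toy values, and `roc_points` is a hypothetical helper rather than a library function. It treats each distinct score as a cut point and predicts "yes" whenever the score is at or above it.

```python
def roc_points(y_true, scores):
    """Return (FPR, TPR) pairs, one per cut point, from strictest to loosest."""
    actual_yes = sum(y_true)
    actual_no = len(y_true) - actual_yes
    # A cut point above every score predicts "no" for everyone.
    points = [(0.0, 0.0)]
    for cut in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(y_true, scores) if s >= cut and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= cut and y == 0)
        points.append((fp / actual_no, tp / actual_yes))
    return points


# Hypothetical toy data: 1 = churn, 0 = not churn, with made-up probabilities.
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]

points = roc_points(y_true, scores)
for fpr, tpr in points:
    print(f"FPR={fpr:.2f}  TPR={tpr:.2f}")
```

Lowering the cut point catches more churners (higher TPR) but also flags more loyal customers (higher FPR), which is the sensitivity versus specificity trade-off the curve shows.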
